#include <iostream>

#include <opencv2/opencv_modules.hpp>

#ifdef HAVE_OPENCV_VIZ

#endif

static const char* keys =

{ "{@images_list | | Image list where the captured pattern images are saved}"

"{@calib_param_path | | Calibration_parameters }"

"{@proj_width | | The projector width used to acquire the pattern }"

"{@proj_height | | The projector height used to acquire the pattern}"

"{@white_thresh | | The white threshold height (optional)}"

"{@black_thresh | | The black threshold (optional)}" };

static void help()

{

cout << "\nThis example shows how to use the \"Structured Light module\" to decode a previously acquired gray code pattern, generating a pointcloud"

"\nCall:\n"

"./example_structured_light_pointcloud <images_list> <calib_param_path> <proj_width> <proj_height> <white_thresh> <black_thresh>\n"

<< endl;

}

static bool readStringList( const string& filename, vector<string>& l )

{

l.resize( 0 );

if( !fs.isOpened() )

{

cerr << "failed to open " << filename << endl;

return false;

}

if( n.

type() != FileNode::SEQ )

{

cerr << "cam 1 images are not a sequence! FAIL" << endl;

return false;

}

for( ; it != it_end; ++it )

{

l.push_back( ( string ) *it );

}

n = fs["cam2"];

if( n.

type() != FileNode::SEQ )

{

cerr << "cam 2 images are not a sequence! FAIL" << endl;

return false;

}

for( ; it != it_end; ++it )

{

l.push_back( ( string ) *it );

}

if( l.size() % 2 != 0 )

{

cout << "Error: the image list contains odd (non-even) number of elements\n";

return false;

}

return true;

}

int main(

int argc,

char** argv )

{

params.width = parser.get<

int>( 2 );

params.height = parser.get<

int>( 3 );

if( images_file.empty() || calib_file.empty() ||

params.width < 1 ||

params.height < 1 || argc < 5 || argc > 7 )

{

help();

return -1;

}

size_t white_thresh = 0;

size_t black_thresh = 0;

if( argc == 7 )

{

white_thresh = parser.get<unsigned>( 4 );

black_thresh = parser.get<unsigned>( 5 );

graycode->setWhiteThreshold( white_thresh );

graycode->setBlackThreshold( black_thresh );

}

vector<string> imagelist;

bool ok = readStringList( images_file, imagelist );

if( !ok || imagelist.empty() )

{

cout << "can not open " << images_file << " or the string list is empty" << endl;

help();

return -1;

}

if( !fs.isOpened() )

{

cout << "Failed to open Calibration Data File." << endl;

help();

return -1;

}

Mat cam1intrinsics, cam1distCoeffs, cam2intrinsics, cam2distCoeffs, R, T;

fs["cam1_intrinsics"] >> cam1intrinsics;

fs["cam2_intrinsics"] >> cam2intrinsics;

fs["cam1_distorsion"] >> cam1distCoeffs;

fs["cam2_distorsion"] >> cam2distCoeffs;

fs["R"] >> R;

fs["T"] >> T;

cout << "cam1intrinsics" << endl << cam1intrinsics << endl;

cout << "cam1distCoeffs" << endl << cam1distCoeffs << endl;

cout << "cam2intrinsics" << endl << cam2intrinsics << endl;

cout << "cam2distCoeffs" << endl << cam2distCoeffs << endl;

cout << "T" << endl << T << endl << "R" << endl << R << endl;

if( (!R.data) || (!T.data) || (!cam1intrinsics.

data) || (!cam2intrinsics.

data) || (!cam1distCoeffs.

data) || (!cam2distCoeffs.

data) )

{

cout << "Failed to load cameras calibration parameters" << endl;

help();

return -1;

}

size_t numberOfPatternImages = graycode->getNumberOfPatternImages();

vector<vector<Mat> > captured_pattern;

captured_pattern.resize( 2 );

captured_pattern[0].resize( numberOfPatternImages );

captured_pattern[1].resize( numberOfPatternImages );

Mat color =

imread( imagelist[numberOfPatternImages], IMREAD_COLOR );

cout << "Rectifying images..." << endl;

stereoRectify( cam1intrinsics, cam1distCoeffs, cam2intrinsics, cam2distCoeffs, imagesSize, R, T, R1, R2, P1, P2, Q, 0,

-1, imagesSize, &validRoi[0], &validRoi[1] );

Mat map1x, map1y, map2x, map2y;

for( size_t i = 0; i < numberOfPatternImages; i++ )

{

captured_pattern[0][i] =

imread( imagelist[i], IMREAD_GRAYSCALE );

captured_pattern[1][i] =

imread( imagelist[i + numberOfPatternImages + 2], IMREAD_GRAYSCALE );

if( (!captured_pattern[0][i].data) || (!captured_pattern[1][i].data) )

{

cout << "Empty images" << endl;

help();

return -1;

}

remap( captured_pattern[1][i], captured_pattern[1][i], map1x, map1y, INTER_NEAREST, BORDER_CONSTANT,

Scalar() );

remap( captured_pattern[0][i], captured_pattern[0][i], map2x, map2y, INTER_NEAREST, BORDER_CONSTANT,

Scalar() );

}

cout << "done" << endl;

vector<Mat> blackImages;

vector<Mat> whiteImages;

blackImages.resize( 2 );

whiteImages.resize( 2 );

cvtColor( color, whiteImages[0], COLOR_RGB2GRAY );

whiteImages[1] =

imread( imagelist[2 * numberOfPatternImages + 2], IMREAD_GRAYSCALE );

blackImages[0] =

imread( imagelist[numberOfPatternImages + 1], IMREAD_GRAYSCALE );

blackImages[1] =

imread( imagelist[2 * numberOfPatternImages + 2 + 1], IMREAD_GRAYSCALE );

remap( color, color, map2x, map2y, INTER_NEAREST, BORDER_CONSTANT,

Scalar() );

remap( whiteImages[0], whiteImages[0], map2x, map2y, INTER_NEAREST, BORDER_CONSTANT,

Scalar() );

remap( whiteImages[1], whiteImages[1], map1x, map1y, INTER_NEAREST, BORDER_CONSTANT,

Scalar() );

remap( blackImages[0], blackImages[0], map2x, map2y, INTER_NEAREST, BORDER_CONSTANT,

Scalar() );

remap( blackImages[1], blackImages[1], map1x, map1y, INTER_NEAREST, BORDER_CONSTANT,

Scalar() );

cout << endl << "Decoding pattern ..." << endl;

bool decoded = graycode->decode( captured_pattern, disparityMap, blackImages, whiteImages,

structured_light::DECODE_3D_UNDERWORLD );

if( decoded )

{

cout << endl << "pattern decoded" << endl;

double min;

double max;

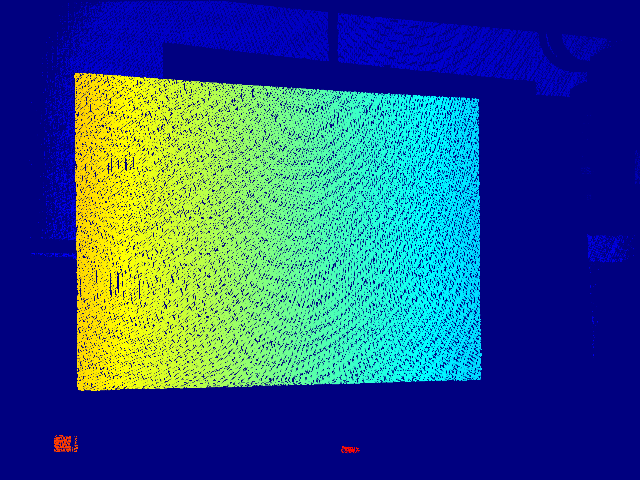

Mat cm_disp, scaledDisparityMap;

cout << "disp min " << min << endl << "disp max " << max << endl;

resize( cm_disp, cm_disp,

Size( 640, 480 ), 0, 0, INTER_LINEAR_EXACT );

imshow(

"cm disparity m", cm_disp );

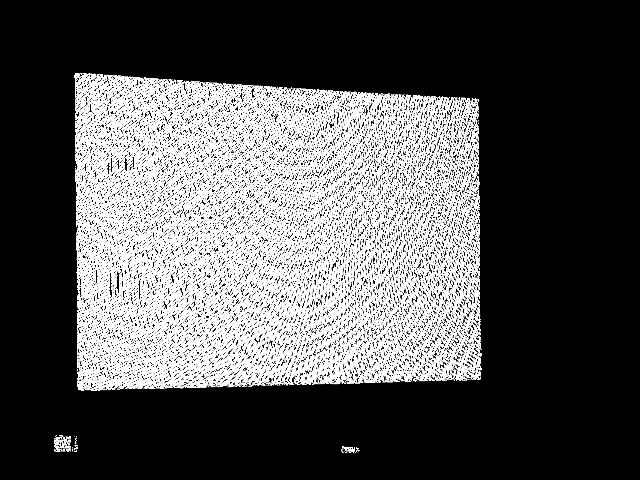

Mat dst, thresholded_disp;

threshold( scaledDisparityMap, thresholded_disp, 0, 255, THRESH_OTSU + THRESH_BINARY );

resize( thresholded_disp, dst,

Size( 640, 480 ), 0, 0, INTER_LINEAR_EXACT );

imshow(

"threshold disp otsu", dst );

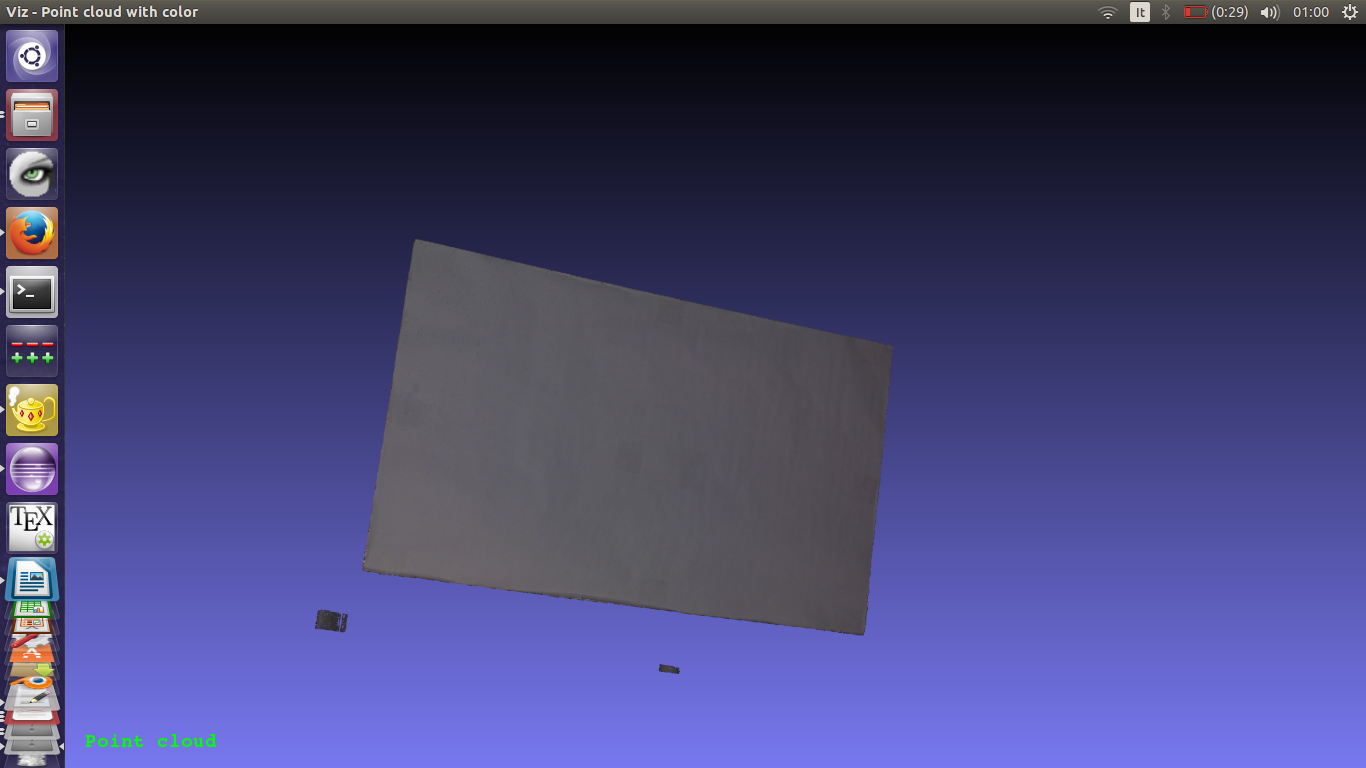

#ifdef HAVE_OPENCV_VIZ

Mat pointcloud_tresh, color_tresh;

pointcloud.

copyTo( pointcloud_tresh, thresholded_disp );

color.

copyTo( color_tresh, thresholded_disp );

myWindow.setBackgroundMeshLab();

myWindow.showWidget(

"pointcloud",

viz::WCloud( pointcloud_tresh, color_tresh ) );

myWindow.showWidget(

"text2d",

viz::WText(

"Point cloud",

Point(20, 20), 20, viz::Color::green() ) );

myWindow.spin();

#endif

}

return 0;

}

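For example, the dataset used for this tutorial was acquired using a projector with a resolution of 1280x800, so 42 pattern images (numbers 1 to 42) plus 1 white image (number 43) and 1 black image (number 44) were captured with each of the two cameras.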
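
The figure of 42 pattern images can be checked with a little arithmetic. The sketch below is plain C++ with no OpenCV dependency. It assumes the usual Gray code scheme in which every bit plane is projected once as a pattern and once as its inverse, for columns and for rows. The names `bitsFor`, `patternImageCount`, `ImageListLayout` and `layoutFor` are hypothetical helpers introduced for illustration, and the list layout (camera 1 entries first, then camera 2) is inferred from the indices used in the sample.

```cpp
#include <cassert>
#include <cstddef>

// Bits needed to give each of n projector columns (or rows) a distinct code,
// i.e. ceil(log2(n)), computed with integers only.
inline std::size_t bitsFor( std::size_t n )
{
  assert( n > 0 );
  std::size_t bits = 0;
  while( ( static_cast<std::size_t>( 1 ) << bits ) < n )
    ++bits;
  return bits;
}

// Pattern images per camera: one pattern plus its inverse per bit plane,
// for columns and for rows (assumed scheme, see the text above).
inline std::size_t patternImageCount( std::size_t projWidth, std::size_t projHeight )
{
  return 2 * bitsFor( projWidth ) + 2 * bitsFor( projHeight );
}

// Hypothetical description of the flat image list (0-based indices):
// cam1 patterns, cam1 white, cam1 black, cam2 patterns, cam2 white, cam2 black.
struct ImageListLayout
{
  std::size_t cam1White;
  std::size_t cam1Black;
  std::size_t cam2FirstPattern;
  std::size_t cam2White;
  std::size_t cam2Black;
  std::size_t total;
};

inline ImageListLayout layoutFor( std::size_t n )
{
  ImageListLayout l;
  l.cam1White = n;
  l.cam1Black = n + 1;
  l.cam2FirstPattern = n + 2;
  l.cam2White = 2 * n + 2;
  l.cam2Black = 2 * n + 3;
  l.total = 2 * n + 4;
  return l;
}
```

For a 1280x800 projector, `patternImageCount( 1280, 800 )` gives 2 * 11 + 2 * 10 = 42, and `layoutFor( 42 )` describes a list of 88 entries, which matches the offsets `numberOfPatternImages`, `numberOfPatternImages + 1` and `2 * numberOfPatternImages + 2` used when loading the white and black images.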

cout << "Rectifying images..." << endl;
stereoRectify( cam1intrinsics, cam1distCoeffs, cam2intrinsics, cam2distCoeffs, imagesSize, R, T, R1, R2, P1, P2, Q, 0,
               -1, imagesSize, &validRoi[0], &validRoi[1] );

Mat map1x, map1y, map2x, map2y;
initUndistortRectifyMap( cam1intrinsics, cam1distCoeffs, R1, P1, imagesSize, CV_32FC1, map1x, map1y );
initUndistortRectifyMap( cam2intrinsics, cam2distCoeffs, R2, P2, imagesSize, CV_32FC1, map2x, map2y );
........
for( size_t i = 0; i < numberOfPatternImages; i++ )
{
  ........
  remap( captured_pattern[1][i], captured_pattern[1][i], map1x, map1y, INTER_NEAREST, BORDER_CONSTANT, Scalar() );
  remap( captured_pattern[0][i], captured_pattern[0][i], map2x, map2y, INTER_NEAREST, BORDER_CONSTANT, Scalar() );
}
........
remap( color, color, map2x, map2y, INTER_NEAREST, BORDER_CONSTANT, Scalar() );
remap( whiteImages[0], whiteImages[0], map2x, map2y, INTER_NEAREST, BORDER_CONSTANT, Scalar() );
remap( whiteImages[1], whiteImages[1], map1x, map1y, INTER_NEAREST, BORDER_CONSTANT, Scalar() );
remap( blackImages[0], blackImages[0], map2x, map2y, INTER_NEAREST, BORDER_CONSTANT, Scalar() );
remap( blackImages[1], blackImages[1], map1x, map1y, INTER_NEAREST, BORDER_CONSTANT, Scalar() );

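At this point, the point cloud can be generated with the reprojectImageTo3D method, taking care to convert the computed disparity into a CV_32FC1 Mat (the decode method computes a CV_64FC1 disparity map).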
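
To make the reprojection step concrete, here is a minimal sketch of the per-pixel arithmetic: the homogeneous vector (x, y, disparity, 1) is multiplied by the 4x4 matrix Q and the result is divided by its last component. It is plain C++ rather than a call into OpenCV. `Mat4`, `Vec3`, `makeQ` and `reprojectPixel` are hypothetical names introduced for illustration, and `makeQ` assumes the common horizontal-stereo form of Q with identical principal points in both rectified cameras. In the real program Q comes from stereoRectify.

```cpp
#include <array>
#include <cassert>

using Mat4 = std::array<std::array<double, 4>, 4>;
using Vec3 = std::array<float, 3>;

// Builds a Q matrix of the assumed horizontal-stereo form:
// focal length f, principal point (cx, cy), baseline component Tx.
inline Mat4 makeQ( double f, double cx, double cy, double Tx )
{
  assert( Tx != 0.0 );
  Mat4 Q = {};  // all entries start at zero
  Q[0][0] = 1.0;  Q[0][3] = -cx;
  Q[1][1] = 1.0;  Q[1][3] = -cy;
  Q[2][3] = f;
  Q[3][2] = -1.0 / Tx;
  return Q;
}

// Reprojects one pixel: [X Y Z W]^T = Q * [x y d 1]^T, then divides by W.
// The disparity is taken as float, mirroring the CV_64FC1 to CV_32FC1
// conversion described in the text.
inline Vec3 reprojectPixel( const Mat4& Q, int x, int y, float disparity )
{
  const double in[4] = { static_cast<double>( x ), static_cast<double>( y ),
                         static_cast<double>( disparity ), 1.0 };
  double out[4] = { 0.0, 0.0, 0.0, 0.0 };
  for( int r = 0; r < 4; ++r )
    for( int c = 0; c < 4; ++c )
      out[r] += Q[r][c] * in[c];

  assert( out[3] != 0.0 );  // zero disparity has no finite depth in this form
  return { static_cast<float>( out[0] / out[3] ),
           static_cast<float>( out[1] / out[3] ),
           static_cast<float>( out[2] / out[3] ) };
}
```

With f = 1000, a principal point of (640, 400) and Tx = -0.1, a pixel at (740, 400) with a disparity of 50 reprojects to roughly (0.2, 0, 2), in the same length unit as Tx. A double-precision disparity value would be narrowed with `static_cast<float>` before the call, which is the scalar counterpart of converting the whole map with convertTo.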